Technology · Analysis

How are AI agents and autonomous systems evolving?

Understanding how AI agents and autonomous systems are evolving, and their role in the energy industry.

Stake & Paper Editorial Team · April 28, 2026

In 2026, agentic AI is expected to evolve from reactive assistants into autonomous systems capable of planning, executing, and adapting complex tasks with minimal human intervention.

This represents a fundamental shift in artificial intelligence, moving beyond tools that respond to user prompts toward systems that independently perceive their environment, reason about goals, and take action to achieve them.

Key Points

- Agentic AI refers to a new generation of artificial intelligence systems that can act autonomously, make decisions, and execute tasks with minimal human intervention, moving beyond simple prompt-based tools.

- AI agents now plan, decide, and execute tasks independently with minimal human input. In many cases, multiple agents collaborate in real time toward a shared goal, which makes them feel more like digital coworkers than simple tools.

- In Microsoft's product framing, Copilot functions as an assistant that responds to user prompts, while Agent 365 represents autonomous systems capable of initiating actions, making decisions, and managing entire processes.

- In 2026, AI agents are primarily deployed where processes are repetitive but not always identical: unstructured inputs such as emails, PDFs, or support tickets, combined with data spread across multiple systems. Analyses describe agents as particularly helpful when they connect multiple systems, consolidate information, and transparently document their work steps.

- The main barrier to moving agentic AI from pilots to production is governance: accountability and control remain unclear for systems that act independently.
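To make the deployment pattern described in the key points more concrete, here is a minimal Python sketch of an agent step that pulls a support ticket together with records from two separate systems and keeps a transparent log of what it did. The system contents, customer IDs, and the `handle_ticket` and `WorkLog` helpers are hypothetical, and the two dictionaries stand in for real CRM and billing APIs.

```python
from dataclasses import dataclass, field

# Hypothetical stand-ins for two separate systems (assumption: a real
# deployment would query a CRM and a billing API rather than in-memory dicts).
CRM = {"C-17": {"name": "Acme Utilities", "tier": "gold"}}
BILLING = {"C-17": {"open_invoices": 2, "overdue": True}}


@dataclass
class WorkLog:
    """Transparent record of every step the agent takes."""
    steps: list[str] = field(default_factory=list)

    def record(self, message: str) -> None:
        self.steps.append(message)


def handle_ticket(ticket: dict, log: WorkLog) -> dict:
    """Consolidate one support ticket with data from two systems, logging each step."""
    customer_id = ticket["customer_id"]
    log.record(f"received ticket {ticket['id']} for customer {customer_id}")

    profile = CRM.get(customer_id, {})
    log.record(f"CRM profile {'found' if profile else 'missing'}")

    billing = BILLING.get(customer_id, {})
    log.record(f"billing record {'found' if billing else 'missing'}")

    # Merge the unstructured request and the structured records into one view.
    summary = {"ticket": ticket["id"], "text": ticket["text"], **profile, **billing}
    log.record("consolidated ticket, profile and billing data")
    return summary


log = WorkLog()
summary = handle_ticket(
    {"id": "T-1", "customer_id": "C-17", "text": "Why was I charged twice?"}, log
)
print(summary)
for step in log.steps:
    print("-", step)
```

The work log is what lets a reviewer see afterwards which systems the agent touched and in what order.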

Understanding AI Agents and Autonomous Systems

An artificial intelligence (AI) agent is a system that autonomously performs tasks by designing workflows with available tools. Beyond natural language processing, AI agents can encompass a wide range of functions, including decision-making, problem-solving, interacting with external environments, and performing actions.

The distinction between AI agents and traditional AI systems lies in their operational approach.

While generative AI automates the creation of complex text, images, and video based on human language interaction, AI agents go further, acting and making decisions in a way a human might.

AI agents take in information from their surroundings, process it with algorithms, and make decisions based on the goals they were given. They then act on those decisions, observe the outcomes, and use those observations to get a better result next time.

This feedback loop distinguishes autonomous agents from static systems.
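The perceive, decide, act, and learn cycle can be illustrated with a toy Python example. It assumes a hypothetical heating environment whose response to power the agent does not know; on each pass the agent acts on its current belief, observes the resulting temperature, and updates that belief. The environment, the numbers, and the `run_feedback_loop` function are illustrative assumptions, not a real control system.

```python
def run_feedback_loop(steps: int = 10, learning_rate: float = 0.5) -> list[float]:
    """Toy perceive-decide-act-learn loop; returns the temperature observed each step."""
    ambient, target = 18.0, 22.0
    true_gain = 0.5       # hidden from the agent: degrees gained per unit of power
    estimated_gain = 1.0  # the agent's initial, wrong belief about that gain

    observed_temps = []
    for _ in range(steps):
        # Decide: pick the power the current belief says will reach the target.
        power = (target - ambient) / estimated_gain
        # Act, then perceive the outcome the environment actually produces.
        observed = ambient + true_gain * power
        observed_temps.append(observed)
        # Learn: move the belief partway toward what this observation implies.
        implied_gain = (observed - ambient) / power
        estimated_gain += learning_rate * (implied_gain - estimated_gain)
    return observed_temps


temps = run_feedback_loop()
print([round(t, 2) for t in temps])
```

Because the agent moves its estimate only halfway toward each observation (`learning_rate=0.5`), the temperature approaches the 22-degree target over several passes instead of jumping there in one step; a static system with the same wrong belief would miss the target every time.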

Agentic technology uses tool calling on the backend to obtain up-to-date information, optimize workflows and create subtasks autonomously to achieve complex goals.
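Tool calling typically means the model emits a structured request (a tool name plus arguments) and the surrounding code executes it. The sketch below assumes the model's output is a JSON list of such requests, which lets one goal be split into subtasks. The tool names, regions, and readings are made up, and real LLM APIs each define their own tool-call format.

```python
import json
from typing import Callable


# Hypothetical tools; in practice these would query live data sources.
def get_grid_load(region: str) -> float:
    return {"north": 812.5, "south": 640.0}.get(region, 0.0)  # MW, made-up values


def get_temperature(region: str) -> float:
    return {"north": 3.0, "south": 11.0}.get(region, 0.0)  # degrees C, made-up values


TOOLS: dict[str, Callable[..., float]] = {
    "get_grid_load": get_grid_load,
    "get_temperature": get_temperature,
}


def execute_tool_calls(model_output: str) -> list[dict]:
    """Parse a JSON list of tool calls (as a model might emit) and run each one."""
    results = []
    for call in json.loads(model_output):
        name, args = call["name"], call.get("arguments", {})
        if name not in TOOLS:
            raise ValueError(f"unknown tool: {name}")
        results.append({"tool": name, "arguments": args, "result": TOOLS[name](**args)})
    return results


# Hard-coded stand-in for a model response: two subtasks serving one goal.
model_output = json.dumps([
    {"name": "get_grid_load", "arguments": {"region": "north"}},
    {"name": "get_temperature", "arguments": {"region": "north"}},
])
for r in execute_tool_calls(model_output):
    print(r)
```

In a full agent, the results would be fed back to the model so it can decide on the next subtask.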

The evolution toward agentic AI reflects broader changes in enterprise expectations.

The rise of autonomous AI agents is tightly connected to the broader maturity of enterprise AI. As organizations embed AI deeper into business functions, autonomy becomes the next logical layer: instead of stopping at insight, enterprises are increasingly looking for systems that can move from understanding to execution.

How It Works

AI agents operate through a structured process that combines perception, reasoning, and action:

Perception and Context Understanding:

LLMs excel at interpreting nuanced and complex queries and can differentiate between similar-sounding requests and understand subtle contextual variations.

The agent gathers information from its environment and available data sources to understand the current situation.

Reasoning and Decision-Making:

The reasoning component analyzes data, knowledge, and goals to select the best course of action. It is a critical part of an AI agent's architecture, enabling the agent to make sound decisions and solve difficult problems.

Agents observe context, reason toward goals, evaluate possible actions, execute through tools, and learn from outcomes while escalating to humans when risk demands it.
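A minimal sketch of the evaluate, execute, or escalate step, assuming each candidate action already carries hypothetical benefit and risk scores between 0 and 1. The scoring rule (benefit minus risk), the 0.7 risk threshold, and the action names are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Action:
    name: str
    expected_benefit: float  # hypothetical score in [0, 1]
    risk: float              # hypothetical score in [0, 1]


RISK_THRESHOLD = 0.7  # assumption: anything riskier needs a human decision


def choose_action(candidates: list[Action]) -> tuple[str, Action]:
    """Rank candidates by benefit minus risk, then execute or escalate the best one."""
    best = max(candidates, key=lambda a: a.expected_benefit - a.risk)
    if best.risk > RISK_THRESHOLD:
        return "escalate_to_human", best
    return "execute", best


decision, action = choose_action([
    Action("reroute_power_via_line_b", expected_benefit=0.8, risk=0.3),
    Action("shed_load_in_district_4", expected_benefit=0.95, risk=0.9),
    Action("do_nothing", expected_benefit=0.1, risk=0.05),
])
print(decision, action.name)

decision2, action2 = choose_action([
    Action("shed_load_in_district_4", expected_benefit=0.95, risk=0.75),
    Action("do_nothing", expected_benefit=0.1, risk=0.05),
])
print(decision2, action2.name)
```

In the first case the best-scoring action is low-risk and runs automatically; in the second, the best option is too risky, so the decision is handed to a person.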

Execution and Adaptation:

The autonomous agent adapts to user expectations over time: its ability to store past interactions in memory and plan future actions supports a personalized experience and more comprehensive responses.

The learning mechanism allows the agent to change its behavior based on new data it receives, turning raw data into actionable decisions.
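Memory of past interactions can be sketched as a bounded store plus a recall step. This Python example keeps the most recent entries and retrieves those sharing the most words with a new request. Word overlap is a deliberately simple stand-in (many systems use embedding similarity instead), and `ConversationMemory` is a hypothetical class.

```python
import re
from collections import deque


def _words(text: str) -> set[str]:
    """Lowercase word set used for naive relevance matching."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class ConversationMemory:
    """Bounded memory of past interactions with simple keyword recall."""

    def __init__(self, max_items: int = 100):
        self.items = deque(maxlen=max_items)  # oldest entries drop off when full

    def remember(self, text: str) -> None:
        self.items.append(text)

    def recall(self, query: str, k: int = 2) -> list[str]:
        """Return up to k stored items sharing the most words with the query."""
        query_words = _words(query)
        scored = [(len(query_words & _words(item)), item) for item in self.items]
        relevant = [pair for pair in scored if pair[0] > 0]
        relevant.sort(key=lambda pair: pair[0], reverse=True)  # stable for ties
        return [item for _, item in relevant[:k]]


memory = ConversationMemory(max_items=3)
memory.remember("User prefers outage alerts by SMS")
memory.remember("User asked about the March bill")
memory.remember("User's meter ID is 4471")
print(memory.recall("outage alerts"))
```

Recalled items would typically be added to the agent's context before it plans its next response, which is how stored preferences shape later behavior.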

Why It Matters

The evolution of AI agents has profound implications for how organizations operate.

Autonomous AI agents are designed to work independently, continuously learning and making decisions without human input. Their ability to process data, adapt to new situations, and integrate with business systems makes them valuable for industries looking to improve efficiency and automation.

According to Microsoft's Work Trend Index, over 80% of business leaders expect AI agents to expand workforce capacity and be integrated into strategy within the next 12 to 18 months.

For the energy sector specifically, the applications are particularly compelling.

Applications of AI agents for energy include grid management, predictive maintenance, and personalized customer support.

Agentic AI is a new paradigm in which autonomous, self-learning AI agents independently perceive, decide, and act to optimize energy production, distribution, and consumption with minimal human intervention. Unlike traditional AI, which requires predefined rules and human oversight, agentic AI continuously learns from its environment, interacts dynamically, and executes actions to meet energy-efficiency, cost-saving, and sustainability goals.

Agentic AI represents a fundamental shift in how renewable energy organizations operate, moving beyond analytics and decision support to autonomous, context-aware systems that can plan, decide, and act across the value chain.
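As a deliberately simple illustration of an agent acting on energy consumption, the sketch below decides hour by hour whether a battery should charge, discharge, or stay idle based on price. The capacity, power rate, price thresholds, and prices are all made-up assumptions, and fixed rules are a simplification: the self-learning behaviour described above would replace them with forecasts and policies updated from outcomes.

```python
def dispatch_battery(prices: list[float], capacity_kwh: float = 10.0,
                     rate_kw: float = 5.0, low: float = 0.10, high: float = 0.30):
    """Decide, hour by hour, whether a battery should charge, discharge, or idle.

    Fixed price thresholds are an assumption for illustration only.
    """
    soc = 0.0  # state of charge in kWh
    actions = []
    for price in prices:  # one price per hour, in currency units per kWh
        if price <= low and soc < capacity_kwh:
            energy = min(rate_kw, capacity_kwh - soc)  # kWh stored in one hour
            soc += energy
            actions.append(("charge", energy))
        elif price >= high and soc > 0:
            energy = min(rate_kw, soc)  # kWh released in one hour
            soc -= energy
            actions.append(("discharge", energy))
        else:
            actions.append(("idle", 0.0))
    return actions, soc


hourly_prices = [0.08, 0.09, 0.12, 0.35, 0.40, 0.20]  # made-up prices
actions, final_soc = dispatch_battery(hourly_prices)
for hour, (action, kwh) in enumerate(actions):
    print(f"hour {hour}: {action} {kwh:.1f} kWh")
```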

However, this autonomy introduces new challenges.

Agentic AI in 2026 is powerful, but not fully autonomous. Without clear rules, monitoring, and testing, an agent will not scale reliably; it becomes a true productivity lever only when treated like mission-critical software, with clearly defined system boundaries, well-specified interfaces, controlled permissions, monitoring, testing, and data protection.
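Controlled permissions and monitoring can be enforced in code around the agent rather than inside it. This minimal Python sketch assumes an allow-list of tool names and uses the standard `logging` module as an audit trail; the `GovernedToolbox` class and both tools are hypothetical.

```python
import logging
from typing import Callable

logging.basicConfig(level=logging.INFO, format="%(name)s %(levelname)s %(message)s")
audit = logging.getLogger("agent.audit")


class PermissionDenied(Exception):
    """Raised when the agent requests a tool outside its allow-list."""


class GovernedToolbox:
    """Expose only allow-listed tools to an agent and audit every request."""

    def __init__(self, tools: dict[str, Callable[..., object]], allowed: set[str]):
        self._tools = tools
        self._allowed = allowed
        self.blocked: list[str] = []  # names of denied tool requests

    def call(self, name: str, **kwargs):
        if name not in self._allowed:
            self.blocked.append(name)
            audit.warning("blocked %s %s", name, kwargs)
            raise PermissionDenied(name)
        audit.info("calling %s %s", name, kwargs)
        result = self._tools[name](**kwargs)
        audit.info("%s returned %r", name, result)
        return result


# Hypothetical tools: a read-only meter query and a high-impact switching action.
toolbox = GovernedToolbox(
    tools={
        "read_meter": lambda meter_id: 42.0,
        "open_breaker": lambda breaker_id: "opened",
    },
    allowed={"read_meter"},  # the agent may read, but not switch equipment
)

print(toolbox.call("read_meter", meter_id="M-1"))
try:
    toolbox.call("open_breaker", breaker_id="B-7")
except PermissionDenied as err:
    print("denied:", err)
```

Keeping the allow-list outside the agent means a model that proposes an out-of-bounds action is stopped and recorded, which supports the accountability that governance requires.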

Related Terms

Large Language Models (LLMs):

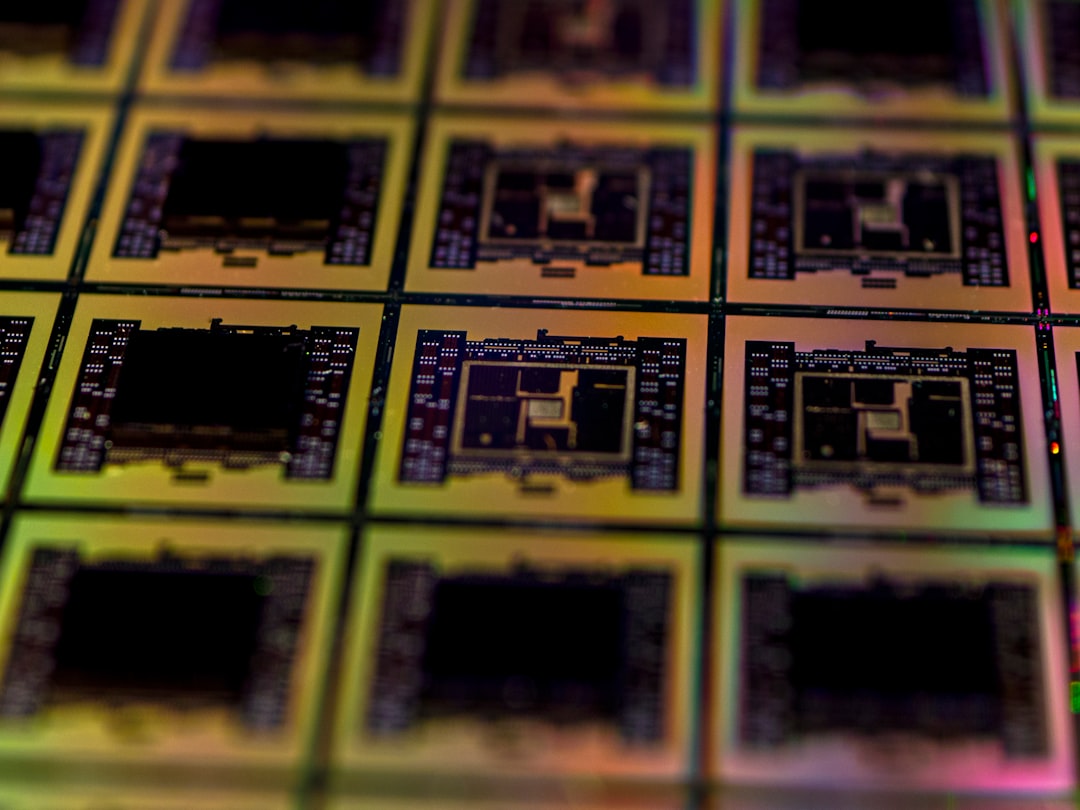

At the core of AI agents are large language models (LLMs), and for this reason, AI agents are often referred to as LLM agents.

Reinforcement Learning:

Reinforcement learning (RL) is a type of machine learning process in which autonomous agents learn to make decisions by interacting with their environment.
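A classic textbook instance of reinforcement learning is tabular Q-learning. The sketch assumes a toy five-cell corridor with a reward at the far end; the agent is never told which direction is correct and learns it purely from interaction. The environment and hyperparameters (`alpha`, `gamma`, `epsilon`) are illustrative choices.

```python
import random

N_STATES, GOAL = 5, 4   # tiny corridor: states 0..4, reward on reaching state 4
ACTIONS = (-1, +1)      # index 0 moves left, index 1 moves right


def step(state: int, action: int) -> tuple[int, float, bool]:
    """Environment: move within the corridor walls; reward 1 at the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL


def train(episodes: int = 200, alpha: float = 0.5, gamma: float = 0.9,
          epsilon: float = 0.1, seed: int = 0) -> list[list[float]]:
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action_index]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: usually exploit, sometimes explore (and break ties randomly).
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, ACTIONS[a])
            # Q-learning update toward reward plus discounted best future value.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


q = train()
policy = ["left" if q[s][0] > q[s][1] else "right" for s in range(GOAL)]
print(policy)
```

After training, the greedy policy in every non-goal state should be to move right, learned only from the reward signal.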

Multi-Agent Systems:

Multi-agent systems, in which several specialized agents collaborate like a human team, are emerging as one of the biggest trends, allowing businesses to automate complex workflows that were previously impossible to handle efficiently.
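A multi-agent workflow can be sketched as specialized functions that each handle one part of a task and hand a shared state to the next. This example assumes a simple sequential hand-off between three hypothetical grid-planning agents with made-up numbers; real multi-agent systems may also run agents concurrently or let them exchange messages.

```python
from typing import Callable

Agent = Callable[[dict], dict]  # each agent reads the shared state and returns an updated copy


def forecasting_agent(state: dict) -> dict:
    # Hypothetical: naive forecast = mean of recent demand readings.
    recent = state["recent_demand_mw"]
    return {**state, "forecast_mw": sum(recent) / len(recent)}


def planning_agent(state: dict) -> dict:
    # Hypothetical rule: hold reserve for any forecast above available capacity.
    shortfall = state["forecast_mw"] - state["capacity_mw"]
    return {**state, "reserve_mw": max(shortfall, 0.0)}


def reporting_agent(state: dict) -> dict:
    report = (f"Forecast {state['forecast_mw']:.0f} MW; "
              f"reserve needed {state['reserve_mw']:.0f} MW")
    return {**state, "report": report}


def run_team(agents: list[Agent], state: dict) -> dict:
    """Pass the shared state through each specialist in turn."""
    for agent in agents:
        state = agent(state)
    return state


result = run_team(
    [forecasting_agent, planning_agent, reporting_agent],
    {"recent_demand_mw": [900.0, 950.0, 1000.0], "capacity_mw": 920.0},
)
print(result["report"])
```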

Frequently Asked Questions

How do AI agents differ from traditional AI assistants?

AI agents have the highest degree of autonomy: they can operate and make decisions independently to achieve a goal. AI assistants are less autonomous, requiring user input and direction, and bots are the least autonomous, typically following pre-programmed rules.

What are the main challenges in deploying AI agents?

The main barrier to moving agentic AI from pilots to production is governance, because accountability and control remain unclear for systems that act independently. Consistency is another challenge: non-deterministic behaviour makes outcomes hard to predict and reproduce.

Additionally, one of the biggest hurdles is the "black box" problem. Many AI agents make decisions that are statistically sound but lack explainability, and in regulated industries like healthcare or finance this creates friction, since stakeholders need to understand why an agent made a certain recommendation.
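The reproducibility problem mentioned in this answer comes partly from sampling: many models choose among likely outputs at random. This Python sketch assumes you control the sampling step yourself and uses made-up scores; it shows that a fixed seed reproduces the same sequence and that temperature 0 collapses sampling to a deterministic choice. Hosted model APIs may not offer the same guarantees.

```python
import math
import random


def sample_next(logits: dict[str, float], temperature: float, rng: random.Random) -> str:
    """Pick one option from softmax(logits / temperature), or greedily at temperature 0."""
    if temperature == 0:
        return max(logits, key=logits.get)  # deterministic: always the top-scoring option
    scaled = [v / temperature for v in logits.values()]
    top = max(scaled)
    weights = [math.exp(s - top) for s in scaled]  # subtract max for numerical stability
    return rng.choices(list(logits), weights=weights, k=1)[0]


# Made-up scores an agent might assign to three possible next actions.
logits = {"approve": 2.0, "defer": 1.5, "reject": 0.5}


def run_with_seed(seed: int, n: int = 5) -> list[str]:
    rng = random.Random(seed)  # a private generator, so other code cannot disturb it
    return [sample_next(logits, 1.0, rng) for _ in range(n)]


print(run_with_seed(42))
print(run_with_seed(42))  # same seed, same sequence
print(sample_next(logits, 0, random.Random()))  # greedy choice
```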

How should organizations approach AI agent adoption?

Experts strongly recommend starting with "human-in-the-loop" systems and establishing clear governance frameworks before scaling.

Organizations should pilot systems before full deployment, testing AI on smaller projects to measure its effectiveness and fix issues early.
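A human-in-the-loop gate can be as simple as requiring approval before any proposed action runs, while logging each verdict so a pilot can be measured. In this sketch the reviewer is a stand-in function that approves only read-only requests; in practice it would be a person, and the `human_in_the_loop` and `approval_rate` helpers are hypothetical.

```python
from typing import Callable

decisions: list[tuple[str, bool]] = []  # pilot log: every proposal and its verdict


def human_in_the_loop(proposal: str,
                      approve: Callable[[str], bool],
                      execute: Callable[[str], str]) -> str:
    """Execute an agent's proposed action only after a reviewer approves it."""
    approved = approve(proposal)
    decisions.append((proposal, approved))
    if approved:
        return execute(proposal)
    return f"rejected: {proposal}"


def approval_rate() -> float:
    """Share of proposals approved so far, one simple pilot metric."""
    return sum(ok for _, ok in decisions) / len(decisions) if decisions else 0.0


def reviewer(proposal: str) -> bool:
    # Stand-in for a person (assumption): approves read-only requests only.
    return proposal.startswith("read")


def executor(proposal: str) -> str:
    return f"done: {proposal}"


first = human_in_the_loop("read meter M-1", reviewer, executor)
second = human_in_the_loop("disconnect customer C-17", reviewer, executor)
print(first, "|", second, "| approval rate:", approval_rate())
```

Tracking how often reviewers accept the agent's proposals gives a concrete signal for deciding when, and for which actions, oversight can be relaxed.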

Last updated: April 28, 2026. For the latest energy news and analysis, visit stakeandpaper.com.